By Sanjay Gupta, Co-founder & President, Velaura AI

Power Is a Limiting Factor for AI Growth

Artificial intelligence has entered a new phase of maturity, one defined not only by the availability of algorithms or silicon innovation, but also by power. Global AI-related electricity demand is projected to more than double to over 945 TWh/year by 2030 (IEA Report), placing enormous pressure on data center capacity, power delivery, and grid infrastructure.

Every watt consumed by an accelerator, whether a GPU, TPU, NPU, or custom XPU, carries additional infrastructure overhead. In most production data centers today, Power Usage Effectiveness (PUE) is around 1.5. That means for every watt delivered to IT equipment, roughly an additional 0.5 watts is consumed by cooling and infrastructure overhead.

One of the most powerful levers is not outside the chip. It is inside it.

Reducing power consumption at the silicon level, where computation actually happens, has a cascading effect. It lowers cooling requirements, enables higher rack density, reduces capital expenditures, and improves environmental sustainability. In short, power efficiency at the chip level is one of the most impactful strategies for lowering total cost of ownership and enabling the next phase of AI scale. At scale, this multiplier effect turns every watt saved at the silicon level into disproportionately larger system-level savings.

Opportunity to Reduce AI XPU Power Consumed by up to 2x: Matrix Multiplication and Low Voltage Technology as Key Levers

Inside a modern AI accelerator, power is not evenly distributed.

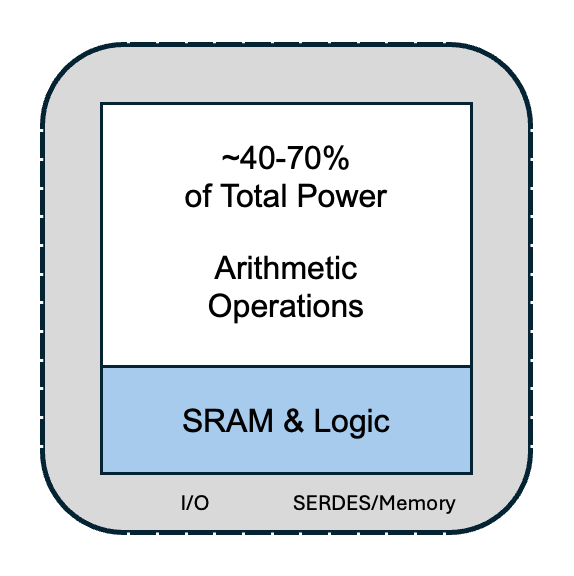

Across AI XPUs, depending on workload, approximately 40 to 70 percent of power is consumed by matrix multiplication (MATMUL) and other arithmetic operations, including multiply-accumulate (MAC) units, accumulators, and activation functions. The remaining 30 to 60 percent is largely driven by memory access and data movement. This concentration of power in compute blocks makes MATMUL the primary target for efficiency gains.

Typical XPU chip

The dynamic power consumed by CMOS logic in a typical XPU is described by the following equation:

P = α · C · V² · f

Where α is the switching activity, C is capacitance, V is supply voltage, and f is operating frequency.

Power scales with the square of voltage. Frequency, by contrast, scales roughly linearly. This asymmetry creates a major opportunity. Most AI accelerators today operate at nominal voltages around 700 to 800 millivolts in typical semiconductor chip designs.

At Velaura AI, we have developed a breakthrough design and IP platform, which we call Titan Core™, that enables operation at lower voltages in high-performance AI silicon at the leading semiconductor 3nm and 2nm process nodes. Importantly, Titan Core maintains performance, yield, and reliability at reduced voltages – parameters that would otherwise degrade significantly.

Applying this low-voltage technology to MATMUL blocks repeated across the AI XPU, we have demonstrated a power reduction of 2-4x in the compute blocks while maintaining iso-performance (same performance). At the XPU level, this translates to up to 2x improvement in power consumed.

Challenges of Ultra-Low-Power Technology

Operating at low voltages poses significant challenges for semiconductor design, especially at the latest process nodes. These include:

- Increased susceptibility to soft errors from neutron and alpha particles, due to reduced critical charge at low voltages, particularly impacting state elements and SRAM arrays.

- The need for Electronic Design Automation (EDA) tools and flows for low voltage operation.

- Significant frequency degradation at reduced voltage, which requires custom digital design libraries and circuit design techniques to maintain frequency and yield.

- Back-end chip design flows to maintain high yield and reliability while maximizing area density.

At Velaura AI, we have been perfecting the approach to address these challenges over the past 4 years across multiple leading-edge tape-outs. We utilize proprietary hardened logic and design-level error correction to mitigate the increased susceptibility to soft errors inherent at 300-500mV. Our technology and learnings have been proven with high reliability and yield across more than 30 million ASICs in high-volume, real world environments.

The Economics: What This Means in Real Dollars

Efficiency gains are only meaningful if they translate into tangible economic value. Ultra-low-power compute does exactly that.

Consider a typical example. Take a 1,000-watt XPU operating continuously in an AI data center with electricity costs at $0.10 per kilowatt-hour. A 2-4x reduction in MATMUL power, representing approximately 50% of the total chip power, translates to an efficiency improvement of 250 – 500 watts. The direct IT-level energy savings over three years range from roughly $650 to $1,300 per accelerator. At an AI data center level deploying 100,000 XPUs this translates to $65-130 million in direct energy cost savings.

This, however, is only part of the story. In power-constrained environments, this effectively converts efficiency gains into additional deployable compute capacity, often much more valuable than the energy savings themselves.

When accounting for cooling and infrastructure overhead with a PUE of approximately 1.5, the facility-level savings increase substantially. Additional benefits include higher rack density without thermal overload, deferred or reduced data-center expansion, lower capital expenditure on power delivery and cooling, and improved deployment flexibility in power-constrained regions. In addition, this allows a data center to deploy a larger number of XPUs without needing infrastructure investment in a new facility.

The Path Forward: Pragmatic, Proven, and Scalable

AI’s next efficiency leap will come from disciplined ultra-low-power design grounded in breakthrough techniques already validated in high-volume silicon across multiple tape-outs and process nodes.

By embracing specialized digital design, structured MATMUL fabrics, voltage-optimized standard-cell libraries, and EDA flows explicitly optimized for low-voltage reliability, the industry can deliver significantly more AI compute per watt and per dollar.

This approach does not require abandoning existing software ecosystems or retraining models. It integrates seamlessly into the AI accelerator roadmap and targets one of the largest sources of power consumption where it matters most.

At Velaura AI, we believe ultra-low-power technology represents one of the most high-impact, pragmatic and scalable paths to sustainable AI. As power becomes the defining constraint of the AI era, efficiency at the silicon level will determine who can continue to scale and who cannot.

The future of AI compute will be written not just by TFLOPS, but by watts per TFLOP.

For more information: